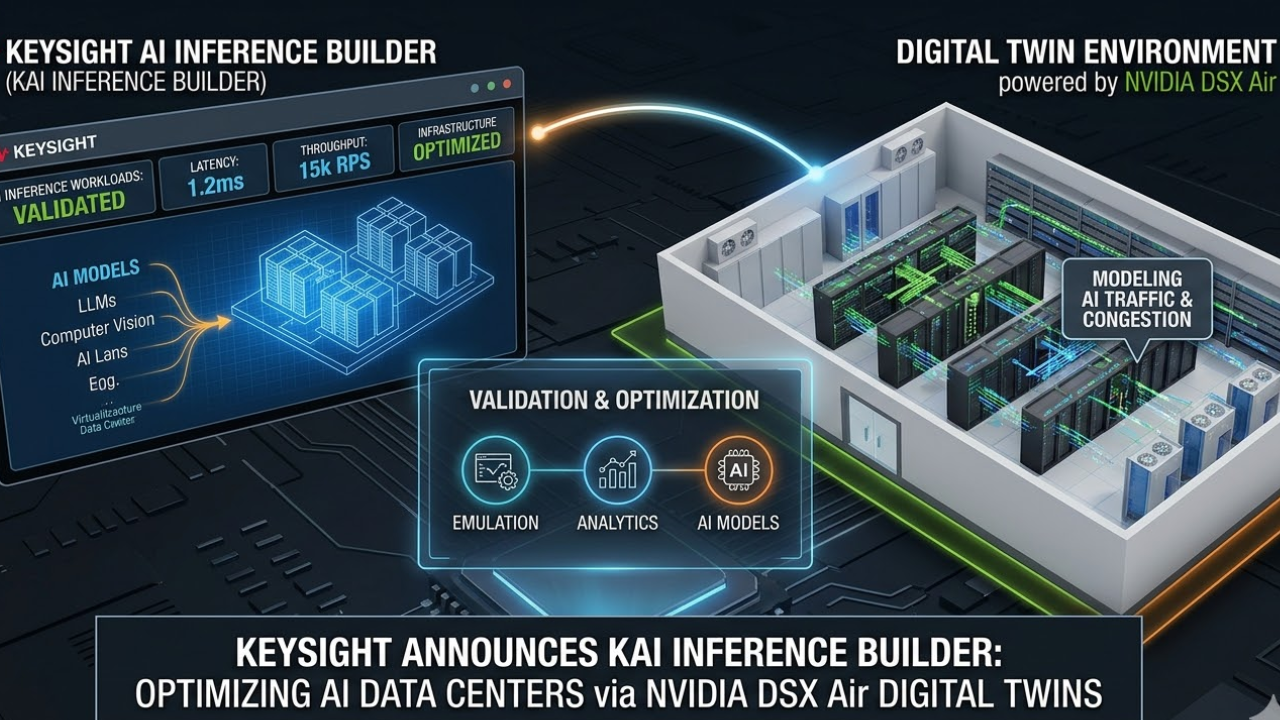

Keysight Technologies has introduced Keysight AI Inference Builder (KAI Inference Builder), an emulation and analytics platform designed to validate inference-optimised AI infrastructure at scale. Keysight will demonstrate the solution at NVIDIA GTC, showcasing operation within NVIDIA DSX Air AI factory simulation environments to model and optimise AI data centre infrastructure, architectures, and performance.

As the AI industry shifts from training large language models (LLMs) to deploying them, optimising inference has become a crucial factor for ROI. However, inference behaviour is highly dynamic and difficult to emulate. Traditional testing methods like synthetic traffic generation or GPU benchmarks cannot accurately reproduce the latency-sensitive workload behaviour of AI inferencing across compute, networking, memory, storage, and security layers.

KAI Inference Builder closes that gap by recreating realistic inference workload patterns and modelling industry-specific usage patterns to validate AI infrastructure, applications, and data centre deployments. The platform gives AI cloud providers, hardware vendors, and application developers a scalable solution for measuring, validating, and optimising real-world inference performance.

Key benefits of KAI Inference Builder include:

- Built for the Inference Era: As part of the Keysight Artificial Intelligence (KAI) portfolio, KAI Inference Builder emulates AI inference workloads at scale and validates full-stack deployments under real-world conditions to optimise performance, scale, and security.

- Industry- and Application-Specific Benchmarking: Instead of generic emulations, KAI Inference Builder emulates industry-specific usage patterns and LLM architectures for AI models seen in finance, healthcare, and other verticals, enabling organisations to model and analyse infrastructure and application behaviour across different types of AI data centre deployments.

- End-to-End Validation and Optimisation: KAI Inference Builder evaluates inference workflows from user request to model response, helping teams reduce costly rework by identifying and resolving bottlenecks early across compute, network, and security layers.

- Subsystem Isolation and Root-Cause Precision: KAI Inference Builder also supports client-only emulation, which helps pinpoint where performance bottlenecks emerge across the AI infrastructure stack under load. This enables targeted optimisation that reduces overprovisioning, lowers costs, and improves overall efficiency.

- NVIDIA DSX Air Integration and Live GTC Demo: Keysight will showcase KAI Inference Builder’s turnkey integration with NVIDIA DSX Air at NVIDIA GTC, generating realistic inference workloads throughout NVIDIA’s data centre simulation environment so operators can validate inference infrastructure before deploying physical equipment.

Ram Periakaruppan, Vice President and General Manager, Network Test & Security Solutions at Keysight, said: “Inference is the key to unlocking AI’s ROI, but that can be challenging to achieve when system resources aren’t optimised for capacity and performance. KAI Inference Builder provides visibility into real-world inference performance across the full stack, enabling customers to validate and optimise deployments before hardware reaches the rack. Showcasing this capability at NVIDIA GTC using NVIDIA’s Air platform demonstrates how organisations can accelerate the path to production while reducing risk and cost.”

Amit Katz, VP of Networking at NVIDIA, said: “As AI data centres scale to unprecedented levels, pre-deployment validation has transitioned from a best practice to a mission-critical requirement. The integration of KAI Inference Builder with NVIDIA DSX Air provides the essential environment needed to eliminate performance volatility and enables NVIDIA AI Factory partners and customers to emulate real inference workloads and preemptively resolve bottlenecks, ensuring optimised AI services reach the market quickly.”