Courtesy: Renesas

Detecting human screams for help is important in disaster relief, security, and healthcare applications. Imagine being stuck in an elevator when the usual means of communication fail. An emergency scream detection system can recognise the distress signal and immediately activate emergency protocols, such as alerting security personnel or triggering an alarm, to get help quickly and save lives.

Renesas’ Reality AI Emergency Scream Detection is a machine learning (ML) model designed to identify human screams. The model does not simply react to any loud noise; it is tuned to distinguish screams of distress from background sounds. Such a system allows help to be dispatched immediately, which is especially important in enclosed or isolated environments where safety is critical.

How does Emergency Scream Detection work?

The emergency scream detection system is trained to distinguish between different sounds based on the data collected. The steps involved in developing this machine learning model are as follows:

- Data Collection and Training: The model’s training begins with comprehensive data collection. A public dataset including a variety of audio samples is used. The “Scream” class, featuring intense nonverbal screaming sounds and screaming with words, is used to train the emergency scream detection system. So that the model also learns what isn’t a scream, a diverse range of sounds such as wind, ambient noise, normal conversation, singing, music, and clapping is drawn from the same dataset.

- Feature Extraction: The next step is to extract meaningful features from the audio files that help the model recognise scream-specific patterns amidst various noises.

- Model Training: Once the best features have been selected, a machine learning classifier is trained to distinguish between “scream” and “non-scream” audio. The training process involves adjusting the model parameters to minimise errors and enhance its performance.
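The feature extraction and model training steps above can be illustrated with a minimal, generic sketch. It is not the Reality AI software's method, which the article does not describe. Everything here is an assumption made for illustration: the 16 kHz sample rate, the two hand-picked features (RMS energy and zero-crossing rate), the synthetic sine tones standing in for real recordings, and the small logistic regression classifier trained by gradient descent.

```python
import math
import random

SAMPLE_RATE = 16_000  # Hz, assumed for this sketch


def extract_features(samples):
    """Return [RMS energy, zero-crossing rate] for one audio frame."""
    n = len(samples)
    rms = math.sqrt(sum(s * s for s in samples) / n)
    crossings = sum((a < 0) != (b < 0) for a, b in zip(samples, samples[1:]))
    return [rms, crossings / (n - 1)]


def synth_frame(freq_hz, amplitude, n=1024):
    """Synthetic stand-in for a recorded frame: a pure sine tone."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
            for i in range(n)]


def make_dataset(per_class=40, seed=0):
    """Loud, high-pitched tones are labelled 1; quiet, low-pitched ones 0."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(per_class):
        loud = synth_frame(rng.uniform(1500, 3000), rng.uniform(0.6, 0.9))
        quiet = synth_frame(rng.uniform(100, 400), rng.uniform(0.05, 0.3))
        X += [extract_features(loud), extract_features(quiet)]
        y += [1, 0]
    return X, y


def fit_scaler(X):
    """Per-feature mean and standard deviation, used to standardise inputs."""
    cols = list(zip(*X))
    means = [sum(c) / len(c) for c in cols]
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
            for c, m in zip(cols, means)]
    return means, stds


def scale(x, scaler):
    means, stds = scaler
    return [(v - m) / s for v, m, s in zip(x, means, stds)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(X, y, epochs=200, lr=0.5):
    """Logistic regression fitted by batch gradient descent on the log loss."""
    scaler = fit_scaler(X)
    Xs = [scale(x, scaler) for x in X]
    w, b = [0.0] * len(Xs[0]), 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for x, label in zip(Xs, y):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - label
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        # Step against the average gradient to reduce the training error.
        w = [wi - lr * g / len(Xs) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(Xs)
    return {"scaler": scaler, "w": w, "b": b}


def predict(model, samples):
    """Return 1 for "scream", 0 for "non-scream"."""
    x = scale(extract_features(samples), model["scaler"])
    z = sum(wi * xi for wi, xi in zip(model["w"], x)) + model["b"]
    return 1 if z > 0 else 0


X, y = make_dataset()
model = train(X, y)
print(predict(model, synth_frame(2500, 0.85)), predict(model, synth_frame(150, 0.1)))
```

On this toy data the two classes are easy to separate. Real scream detection has to cope with far more varied sounds, which is why the choice of features and the breadth of the non-scream examples matter so much.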

Built with these methods, the emergency scream detection system helps ensure that emergency responses are swift, providing a vital safeguard in various environments.

Application Example

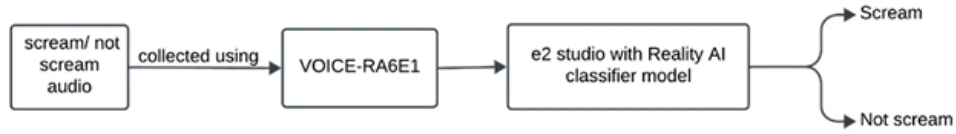

Audio signals are collected from the real-world environment using the Renesas VOICE-RA6E1 Voice User Demonstration Kit. These audio signals are then processed by Renesas’ Reality AI-trained classifier model, which distinguishes between “scream” and “non-scream” sounds.

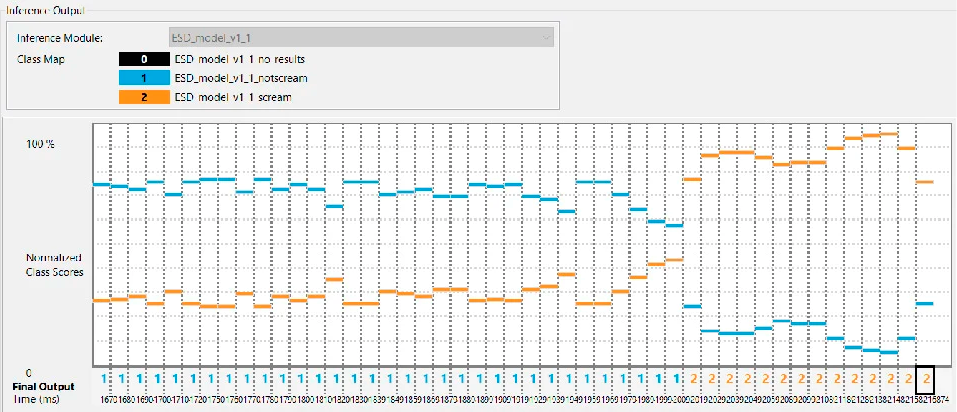

In live testing, Renesas’ Emergency Scream Detection model achieved ≥90% accuracy for screams at distances of up to 2 metres from the testing board. The testing conditions included background noises such as wind, elevator music, human conversations, baby cries, and ringing phones, to check that the model still identifies distress signals accurately amid such sounds.
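As a rough illustration of how a figure such as "accuracy for screams" can be computed from a labelled test log, the sketch below reports the share of real screams that were detected alongside the share of non-scream sounds that raised a false alarm. The function name and the trial data are hypothetical and invented for this example; they are not Renesas' test results or tooling.

```python
def scream_metrics(true_labels, predicted_labels):
    """Return (scream detection rate, false-alarm rate) for paired labels."""
    pairs = list(zip(true_labels, predicted_labels))
    screams = [p for t, p in pairs if t == "scream"]
    others = [p for t, p in pairs if t != "scream"]
    detection_rate = sum(p == "scream" for p in screams) / len(screams)
    false_alarm_rate = sum(p == "scream" for p in others) / len(others)
    return detection_rate, false_alarm_rate


# Hypothetical trial log, invented for illustration only:
# 10 real screams (one missed) and 10 background sounds (one false alarm).
truth = ["scream"] * 10 + ["non-scream"] * 10
preds = ["scream"] * 9 + ["non-scream"] + ["scream"] + ["non-scream"] * 9

print(scream_metrics(truth, preds))  # (0.9, 0.1)
```

Reporting both numbers matters because a detector that labels every sound as a scream would score perfectly on the first figure while being useless in practice.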

Easily Build the Application Example

Users can collect audio signals with Renesas’ e² studio IDE and integrate any AI model generated from Renesas’ Reality AI software. After collecting data from a public dataset*, use the Reality AI software’s tools to perform feature extraction and model training, and to deploy the model as C code.

The deployed model can be integrated for live testing using the e² studio IDE. After integration, the model can be extensively tested in a live setting using the VOICE-RA6E1 board, and the live results can be visualised using the AI live monitor.
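One design question that comes up in live testing is how per-window classifier outputs turn into an alarm. The sketch below shows one common approach, assumed here for illustration and not taken from the Renesas example: raise the alarm only when several recent audio windows were classified as screams, so that a single misclassified window does not trigger it. The function name, the `required` and `history` parameters, and the sample stream are all hypothetical, and the logic is written in Python for readability even though the deployed model itself is C code.

```python
from collections import deque


def alarm_events(window_predictions, required=3, history=5):
    """Yield the index of each window at which an alarm would be raised.

    An alarm fires when at least `required` of the last `history` windows
    were classified as scream (1). It re-arms once the count drops below
    `required` again.
    """
    recent = deque(maxlen=history)
    active = False
    for index, prediction in enumerate(window_predictions):
        recent.append(prediction)
        triggered = sum(recent) >= required
        if triggered and not active:
            yield index
        active = triggered


# Hypothetical per-window classifier outputs (1 = scream, 0 = non-scream).
stream = [0, 1, 0, 0, 1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
print(list(alarm_events(stream)))  # [5]
```

The isolated detections at windows 1 and 12 are ignored, while the sustained run starting at window 4 raises a single alarm at window 5. Larger values of `required` reduce false alarms at the cost of a slower response.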

Experience the seamless and fast integration capabilities of Renesas’ Reality AI software and e² studio IDE in model training, deployment, and testing of an application.

Conclusion

The Reality AI Emergency Scream Detection application exemplifies the potential of machine learning to enhance safety in various settings. It also demonstrates how users can employ Renesas’ technology to combine advanced feature extraction, model training, and deployment with real-time response capabilities. The scalable Reality AI Tools can generate ML models for a wide range of Renesas MCU and MPU devices.